Inside the Lab

How the AI labs are industrializing task automation and reshaping the software industry

This year has been a tough time to own tech stocks. Software? Dead. Mega cap tech? Too much capex. Consumer internet? Agents will use the internet in the future, not humans.

A publicly-traded venture fund with just under half its assets in Anthropic, Databricks, and OpenAI?

Up 15x in a week. At least before falling back down 70% from its peak.

Meanwhile, rumors abounded on Friday as Anthropic apparently leaked the drop of its latest model, “Mythos” (couldn’t they have picked a less ominous name?). Rumored to cost $10B to run, the model is allegedly in a new class of models beyond Anthropic’s state-of-the-art Opus models.

The rumors around Mythos sent stocks down on Friday, with the S&P 500 down 1.7% and software down over 3.5% for the day. Are we living through Citrini 2028?

To paraphrase The Razor’s Edge blog post this week - the market has basically given up trying to figure out how AI is going to reshape the economy - but whatever it is, it’s decided the impact is going to be BIG.

And yet, for as much as the AI labs and their products - Google’s Gemini, Anthropic’s Claude, and OpenAI’s ChatGPT - have come to dominate the economy and our digital lives, we know remarkably little about how they think about the major questions facing the software industry and startup ecosystem.

Last week my team and I hosted a virtual roundtable with a product leader at one of the major AI labs, responsible for overseeing one of their most impactful AI products, loved by millions of users.

The goal: Get an insider’s perspective on how the AI labs are thinking about building new products, driving customer adoption, and what it all means for the early-stage tech ecosystem.

RL Environments & the AI Labs

The first major takeaway from our conversation: The AI labs are currently mapping the knowledge work economy to reinforcement learning (RL) environments. If I lost you with that sentence, stay with me.

A reinforcement learning environment is essentially a sandbox in which AI can be trained on a particular task through iterative feedback. For example, say an AI lab wanted to build an AI agent with the ability to write investment memos for private equity firms.

First, they would fine-tune the model on thousands of real investment memos, so the AI learns the proper format, structure, and style.

Then, just like an investment associate, the AI is given a set of financial data on a company and is asked to build its own investment memo.

Once the AI generates the investment memo, the AI lab has humans with experience in private equity grade the memo and give feedback - “the investment recommendation is too short”, “it missed a key risk”, “the style is wrong”, or “this factor was not included in the analysis”.

Then the process is repeated: Give the AI financial data on a company, ask the AI to generate an investment memo, ask human graders to judge the investment memo, and give the feedback back to the AI. Again, and again, and again.

This is a simplified version of a process known as reinforcement learning from human feedback (RLHF).
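For readers who think in code, here is a toy Python sketch of that generate, grade, repeat loop. Everything in it is an assumption made for illustration: the section checklist, the function names, and especially the update rule, which simply nudges the "model" toward whatever the grader flagged as missing. Real training pipelines update a neural network with far more sophisticated methods; this only shows the shape of the loop.

```python
import random

# Hypothetical checklist a human grader might apply to an investment memo.
REQUIRED_SECTIONS = ["thesis", "financials", "risks", "recommendation"]


def generate_memo(include_prob, rng):
    """Toy 'model': includes each section with its current probability."""
    return [s for s in REQUIRED_SECTIONS if rng.random() < include_prob[s]]


def grade_memo(memo):
    """Stand-in for a human grader: a score in [0, 1] plus the missing sections."""
    missing = [s for s in REQUIRED_SECTIONS if s not in memo]
    score = 1 - len(missing) / len(REQUIRED_SECTIONS)
    return score, missing


def train(rounds=200, step=0.05, seed=0):
    """Repeat generate -> grade -> feed back, again and again."""
    rng = random.Random(seed)
    include_prob = {s: 0.5 for s in REQUIRED_SECTIONS}
    for _ in range(rounds):
        memo = generate_memo(include_prob, rng)
        _score, missing = grade_memo(memo)
        # Feed the feedback back: make flagged sections more likely next time.
        for section in missing:
            include_prob[section] = min(1.0, include_prob[section] + step)
    return include_prob


if __name__ == "__main__":
    print(train())
```

Because the toy update only ever moves probabilities upward, the "model" can only get better at including the sections graders ask for. Real systems have no such guarantee, which is part of why high-quality graders matter so much.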

In practice, today the most advanced RL environments go further. They don’t just ask a model to generate a document and have humans grade it. They actually put the AI agent in a simulated workspace where it has to take dozens of actions, like pulling data from an SEC filing, building a model in an Excel spreadsheet, synthesizing information from a pitch deck, and generating a final investment memo. It’s like dropping an analyst into a virtual office and seeing if they can do the job.

The “environment” in an RL environment is that virtual space - like a knowledge worker’s desktop computer setup - in which the AI agent has access to the internet, files, data, and tools that a human would have in doing their job.

An RL environment for building an agent that could shop on behalf of humans would involve setting up a virtual online store where the agent could browse, add items to a cart, and check out.
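To make that concrete, here is a minimal sketch of what such a store could look like in Python. The class name, catalog, action names, and reward are all hypothetical; the only thing borrowed from common practice is the general pattern of a `reset` method that starts an episode and a `step` method that applies one action and reports what happened.

```python
from dataclasses import dataclass, field


@dataclass
class ShopEnv:
    """Toy simulated store. Catalog, actions, and reward are hypothetical."""
    catalog: dict = field(default_factory=lambda: {"socks": 8.0, "mug": 12.0})
    target_item: str = "mug"  # what the human asked the agent to buy
    cart: list = field(default_factory=list)
    done: bool = False

    def _observe(self):
        return {"catalog": dict(self.catalog), "cart": list(self.cart)}

    def reset(self):
        """Start a fresh shopping episode with an empty cart."""
        self.cart = []
        self.done = False
        return self._observe()

    def step(self, action, item=None):
        """Apply one agent action; return (observation, reward, done)."""
        if self.done:
            raise RuntimeError("episode finished; call reset()")
        reward = 0.0
        if action == "add_to_cart" and item in self.catalog:
            self.cart.append(item)
        elif action == "checkout":
            self.done = True
            # Reward only if the cart holds exactly the requested item.
            reward = 1.0 if self.cart == [self.target_item] else 0.0
        # Any other action (e.g. "browse") just returns the current view.
        return self._observe(), reward, self.done


if __name__ == "__main__":
    env = ShopEnv()
    env.reset()
    env.step("browse")
    env.step("add_to_cart", "mug")
    print(env.step("checkout"))
```

An agent that browses, adds the mug, and checks out earns the full reward; one that checks out with the wrong cart earns nothing. Training consists of running many such episodes and reinforcing the behavior that gets rewarded.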

An RL environment for a legal AI agent would involve placing it in a simulated due diligence workflow: reviewing contracts, flagging non-standard clauses, cross-referencing terms against a regulatory database, and producing a summary memo for a partner to review.

For a marketing AI agent, an RL environment could consist of giving the AI a product brief, access to a simulated ad platform, and asking it to write copy, select audience segments, set bid parameters, and launch a campaign. And then grading it on whether the campaign hit its target KPIs.
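The grading step in the marketing example differs from the memo example in one useful way: no human has to read anything, because the score comes straight from the outcome. A rough sketch of that idea, with made-up KPI names and targets, might look like this:

```python
def grade_campaign(results, targets):
    """Return the fraction of KPI targets the simulated campaign met (0.0 to 1.0).

    `targets` maps a KPI name to (direction, goal), where direction is
    "at_least" or "at_most". All KPI names here are hypothetical.
    """
    met = 0
    for kpi, (direction, goal) in targets.items():
        value = results[kpi]
        if direction == "at_least" and value >= goal:
            met += 1
        elif direction == "at_most" and value <= goal:
            met += 1
    return met / len(targets)


if __name__ == "__main__":
    targets = {
        "click_through_rate": ("at_least", 0.02),
        "cost_per_acquisition": ("at_most", 40.0),
    }
    results = {"click_through_rate": 0.025, "cost_per_acquisition": 55.0}
    print(grade_campaign(results, targets))  # 0.5: one of two targets met
```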

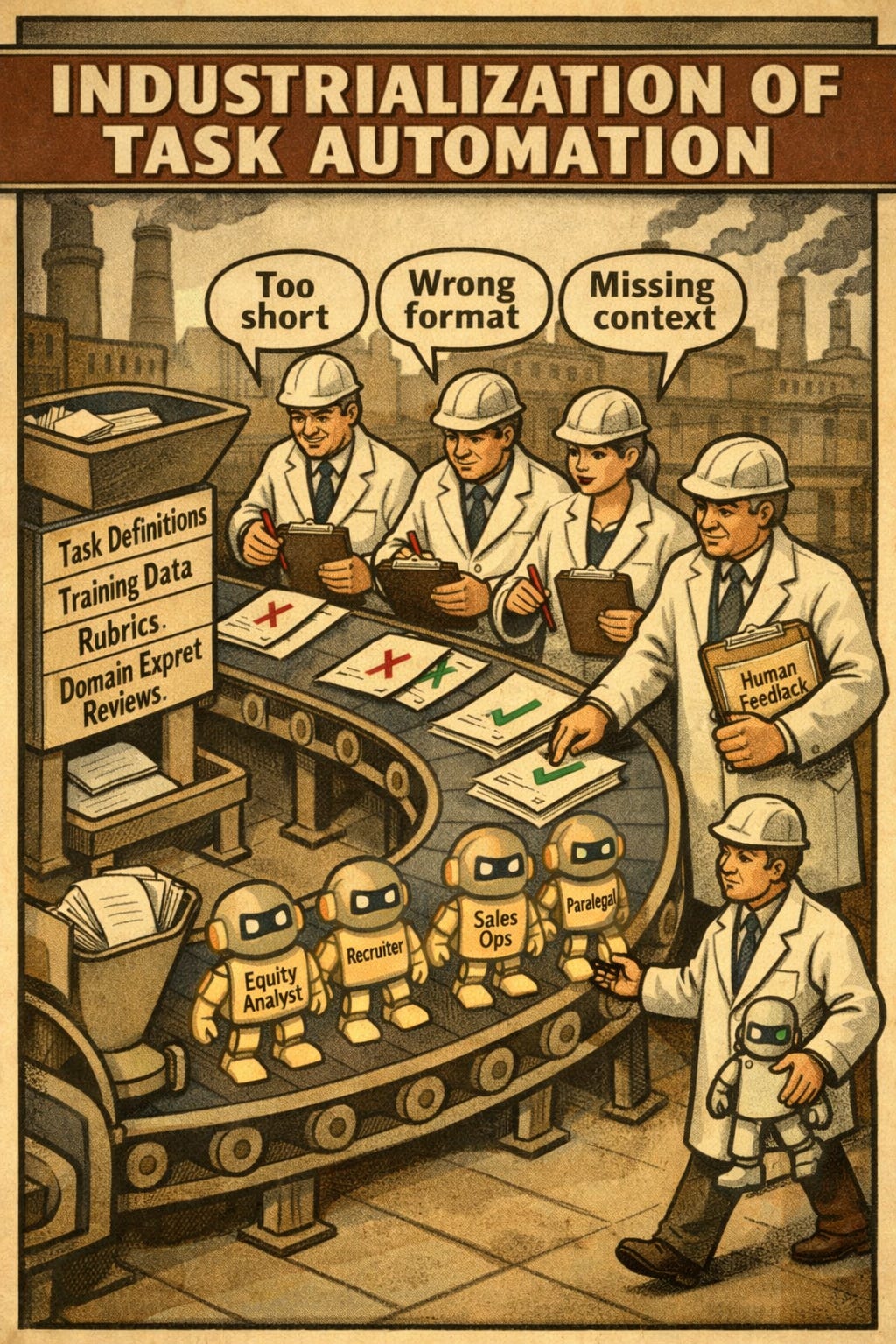

The Industrialization of Task Automation

Reinforcement learning is one of the cutting-edge techniques in training AI, and it has enabled AI to get as good at software development as most human engineers.

Software development was the first knowledge work domain in which AI became that good, in part because there is so much code readily available that can be used as data to train the AI.

More simply, data is the raw material for training AI.

And the AI labs are spending a LOT on data.

Check out the post below, which went viral on X last week. The numbers are from Claude, so their accuracy is unclear. But most of the debate in the comments is that these numbers - $3B on the low end spent by the AI labs on buying data - are too low.

But what is all this data they’re buying? Very specific, domain-focused data that can be used to build RL environments.

As I mentioned, software engineering was only the first domain that the AI labs focused on automating. Today, if you ask cutting-edge software companies what percentage of their code is produced by AI, you’ll likely hear upwards of 80-90%.

So what comes after software? Everything.

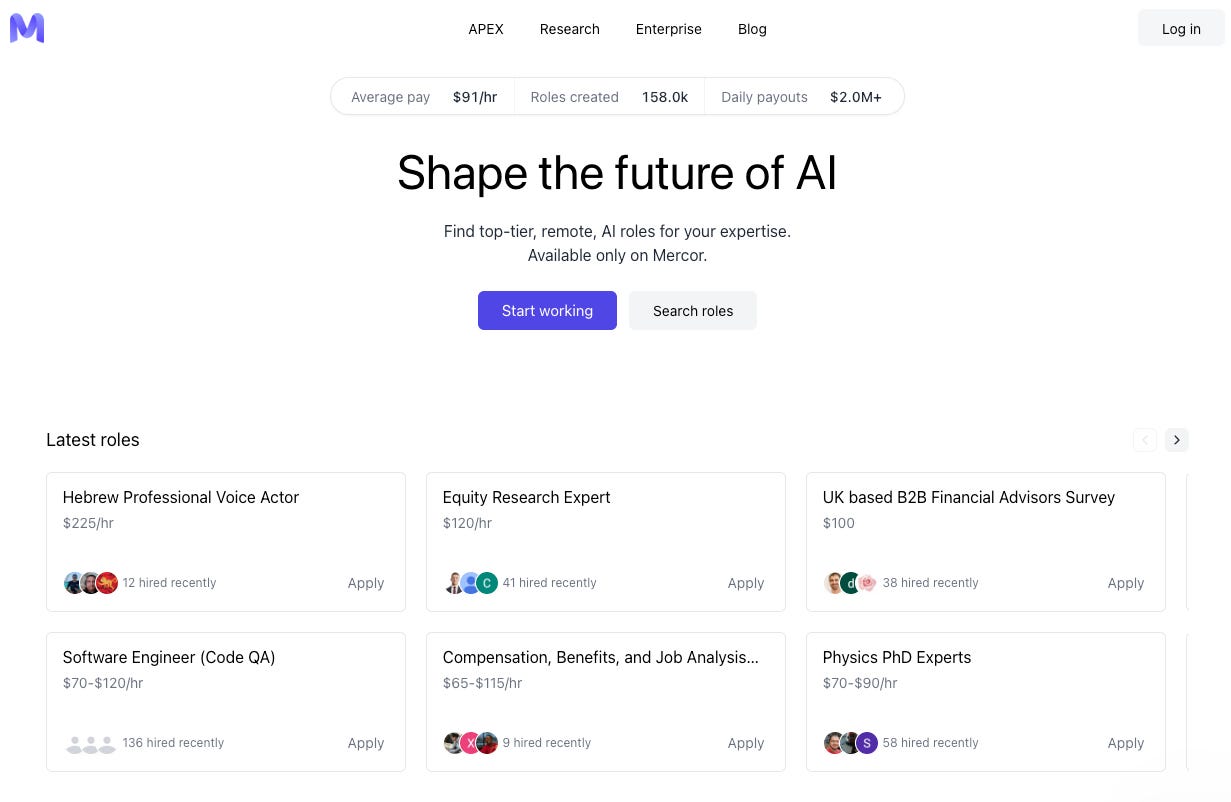

The AI labs pay companies like Mercor to find domain experts who can help them generate domain-specific data, review the AI model’s output in a specific domain, and help the AI models close the gap from being 60% as good as a human to 95% as good as a human. A quick glance at Mercor’s homepage shows them recruiting experts to generate data in domains ranging from “Hebrew Professional Voice Actor” to “Physics PhD Experts” to “UK based B2B Financial Advisors”.

Anthropic already has plugins for financial services - my earlier example of AI writing private equity memos wasn’t hypothetical. They aren’t replacing a private equity associate yet, but over the course of this year, the incremental junior hire will be increasingly weighed against the out-of-the-box capabilities provided by the major AI labs.

The same process that made AI as good as most software engineers at writing code is now being replicated across the knowledge work economy - anywhere that has data to train on.

The product leader we spoke with was crystal clear. If a task produces an output that can be defined with a rubric and graded by an expert, then it’s in the crosshairs of automation from the AI labs.
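What "defined with a rubric and graded by an expert" means in practice can be sketched in a few lines of Python. The criteria, weights, and 0-5 rating scale below are my own illustrative assumptions, not anything the labs have published:

```python
# Hypothetical rubric for an investment memo: criterion -> weight (sums to 1.0).
RUBRIC = {"recommendation_depth": 0.4, "risk_coverage": 0.4, "style": 0.2}


def rubric_score(ratings, rubric=RUBRIC, scale=5):
    """Turn an expert's per-criterion ratings (0..scale) into one score in [0, 1]."""
    if set(ratings) != set(rubric):
        raise ValueError("ratings must cover exactly the rubric's criteria")
    return sum(rubric[c] * ratings[c] / scale for c in rubric)


if __name__ == "__main__":
    expert_ratings = {"recommendation_depth": 2, "risk_coverage": 4, "style": 5}
    print(round(rubric_score(expert_ratings), 2))  # 0.68
```

Once a task can be boiled down to a number like this, it can serve as the training signal for an RL environment. That is the test to apply to your own job function.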

The AI labs are coloring in the map of the knowledge work economy. They’re building RL environments one job function at a time, until no space on the map of the economy is left blank. Not dozens of tasks, but hundreds, if not thousands.

If that sounds like a research project - it’s not. Think of it more like an industrialization program. The volume, quality, and breadth of what AI models can do is going to explode this year as a result.

So if you’re a knowledge worker reading this - a VP of marketing, a lawyer, a product designer, a consultant, an accountant - the question isn’t whether your job function will eventually be colored in. It’s when.

The AI labs don’t announce the RL environments they’re building and which specific capabilities the models are going to get good at next - they’re just shipping. One day your AI agent can’t do your job, the next day you wake up and Anthropic shipped a Claude Cowork plugin that can handle half your workflows.

The people who will thrive in their jobs over the next decade aren’t waiting for that day. They’re living inside of Claude, ChatGPT, and Gemini, and building an intuition for how AI can help them at work and where it falls short.

AI fluency is already leading to a divide among workers, as recent data from Anthropic shows: users who've been on Claude for six months or more have a 10% higher success rate in their conversations with it, even after controlling for the type of work they're doing.

The early adopters are pulling ahead, and the gap will grow.

The SaaS Selloff is an Overreaction, but the Warning is Real

The industrialization of task automation is the backdrop against which the entire “is software dead?” debate needs to be understood.

In The Great SaaS Crash of 2026, my core thesis was that software is not dead, but software companies that don’t rearchitect for an agent-first world will be.

I put two of the major software bear cases to our product leader guest directly:

1) If everyone can vibe code their software, why would they buy from a vendor?

2) If agents become the front door for knowledge work, do software companies shift from being valuable workflow systems, to fading into the background as mere data providers?

His answers at once confirmed what I’ve argued in blog posts previously, and created even more concern for me as I look at the landscape of incumbent software vendors.

On the vibe coding bear case

Vibe coding has become so powerful that most of us in the tech industry have that one friend who loves talking about the projects he’s vibe coding. Yes, if you ask my friends, I am that guy - but that’s a story for another post.

Our guest cited examples from his own network - one friend who built his own lightweight Bloomberg terminal to track stocks, and another who built an SAT tutoring app for his kid.

He explained that while those are interesting examples, they reflect the top 1% of power users.

Most users of software can’t actually conceive of how a product could be dramatically better for their needs. Even if someone intuitively feels that a product is clunky or overly complicated, translating that dissatisfaction into a design spec is an entirely different skill. And if you can’t articulate your vision of a better product, you’ll end up rebuilding whatever you currently use - wasting resources instead of just paying a vendor like HubSpot to provide that software app for you.

The bottleneck in building great software was never technical ability, but rather imagination - and you can’t prompt your way past that (at least, for now).

So the eternal buy vs. build question boils down to whether it’s a simple, self-contained use case where the app doesn’t do much. For those use cases, “build” will absolutely win more today than it used to.

But most large, valuable software companies don’t provide products that fit that description. They are deeply embedded in workflows, hold proprietary data and tacit knowledge from their users, and have been built to handle the thousands of edge cases that exist in organizational design across large enterprises. They’re not going to be vibe coded away.

The front door bear case

But what happens if ChatGPT and Claude become the front door to software applications, the same way that Google became the front door to websites in the 2000s?

Here, our guest was more bearish on software than I expected.

He explained that ephemeral user interfaces - dynamic UIs that appear inside of general chat agents on the fly - are coming this year. In fact, Anthropic recently announced that Claude has new capabilities around building custom visuals. I bet OpenAI will follow suit soon.

Today, if you give Claude data and tell it to build you a chart - it can create a beautiful visualization for you.

But it goes further than charts. He described a future where AI agents will be able to generate interactive interfaces with familiar features. Buttons, input fields, and patterns that look and feel like the SaaS apps their users are already familiar with. If a user doesn’t know how to delegate a task to the AI, the agent will generate a UI to make it intuitive. For example, a project management board to help delegate work across a team.

These functional interfaces pull live data from the software systems underneath via MCP connectors, and then disappear when the user is done.

As the product leader we spoke with put it, the days of having 10 tabs open with different software applications are likely coming to an end. AI agents will increasingly become the front door through which knowledge work gets done.

Also importantly - our guest suggested that context windows that are functionally infinite are likely coming soon. The AI labs are working on models with much better memory and capabilities around how to use a knowledge base. If AI agents not only can access data in software and create ephemeral user interfaces, but also have perfect memory of everything a user does in every tool - that creates the potential for agents to handle far more complex multi-tool workflows.

The implication, which the market is likely pricing in with software companies trading at rock-bottom multiples, is that if the user interaction layer moves from software applications to AI agents, software companies risk losing their end-user relationship. They become infrastructure, and while their data and ability to handle edge cases still have value, it’s likely a lower value than the front-and-center position that enterprise software companies have held over the last 30 years.

When Google became the front door to the internet, websites didn't disappear. But it didn't matter how beautiful your website was or how strong your brand. Google captured distribution, discovery, and monetization. You were subservient to the interface layer. The same structural dynamic is threatening to play out between AI agents and software companies - only faster.

How incumbent software providers can position themselves

The opportunity for incumbent software vendors is to build the AI workflows that their users are demanding.

But most are blowing the opportunity.

Our guest described how most software vendors are tacking on a chatbot to their software application and calling it AI. He cited Salesforce as an example.

But what if the end user of software applications becomes an agent, not a human?

On that topic, he shared a story that every software executive should hear. He explained how his company had standardized its communication on a collaboration software vendor. When they wanted to integrate their AI agents into the collaboration tool so that their human employees could talk to AI, the vendor shut it down - restricting them from using AI to search, retrieve information, or automate workflows inside of the tool.

The AI lab’s reaction wasn’t to work around the software vendor’s restrictions, but instead to ask themselves whether they should just build their own internal version. This is one of the key risks software bears have been warning about.

Some vendors like Workday are starting to charge customers to connect different systems, including AI, to their data. This makes sense only if the software is fully optimized and open for AI agents to use the platform, on equal footing with their human counterparts.

If they don’t accept agents as first-class users in their tools, then software vendors will open themselves up to competitors who will - either existing vendors or internally-built tooling.

What This Means

The AI labs are simultaneously getting better at doing the work of knowledge workers and becoming the place where said work gets done.

For companies in the software/AI ecosystem - established players and startups alike - the only response is to build a system that gets more valuable and effective as the models get better. Because as Anthropic just teased with Mythos - they’re about to get a lot better.

For knowledge workers, AI labs are building the infrastructure to come for every routine part of your job. The people who embrace the AI models’ frontier capabilities as a productivity tool will be 10x as productive. Early adopters are pulling ahead, and the gap is widening.

I opened this piece by noting that the market has essentially decided AI’s impact is going to be enormous, even if it can’t articulate how. After sitting across from someone who’s helped build one of the products at the center of that transformation, it seems like the market is right. But the way it unfolds will be more targeted, more structural, and faster than many realize.

This is Part 1 of a two-part series. Part 2 will cover what our conversation revealed about how to build AI products, and how startups can embrace AI to get ahead.